Every Monday, your growth team opens the same spreadsheet. They pull CPI from Meta, install counts from Google, and subscriber numbers from RevenueCat -- then spend an hour trying to figure out why nothing adds up. CPI looks great. Installs are climbing. But cost-per-subscriber keeps getting worse, and no one can explain why.

Creative fatigue and targeting mistakes are real -- but there is a silent tax underneath all of them: what you are telling Meta and Google to optimize for. Every conversion signal you send teaches the algorithm what a "good user" looks like. If those signals include impulse cancellers, trial-only users, and unverified installs, the algorithm learns the wrong lesson -- and spends your budget finding more of the same.

Key Takeaways

Meta and Google do not choose at random which users see your ads. They use the conversion signals you send -- installs, trial starts, purchases -- to build a profile of your ideal user. Then they find more people who match that profile.

When the signals are wrong, the targeting is wrong. And for subscription apps, the signals are almost always wrong.

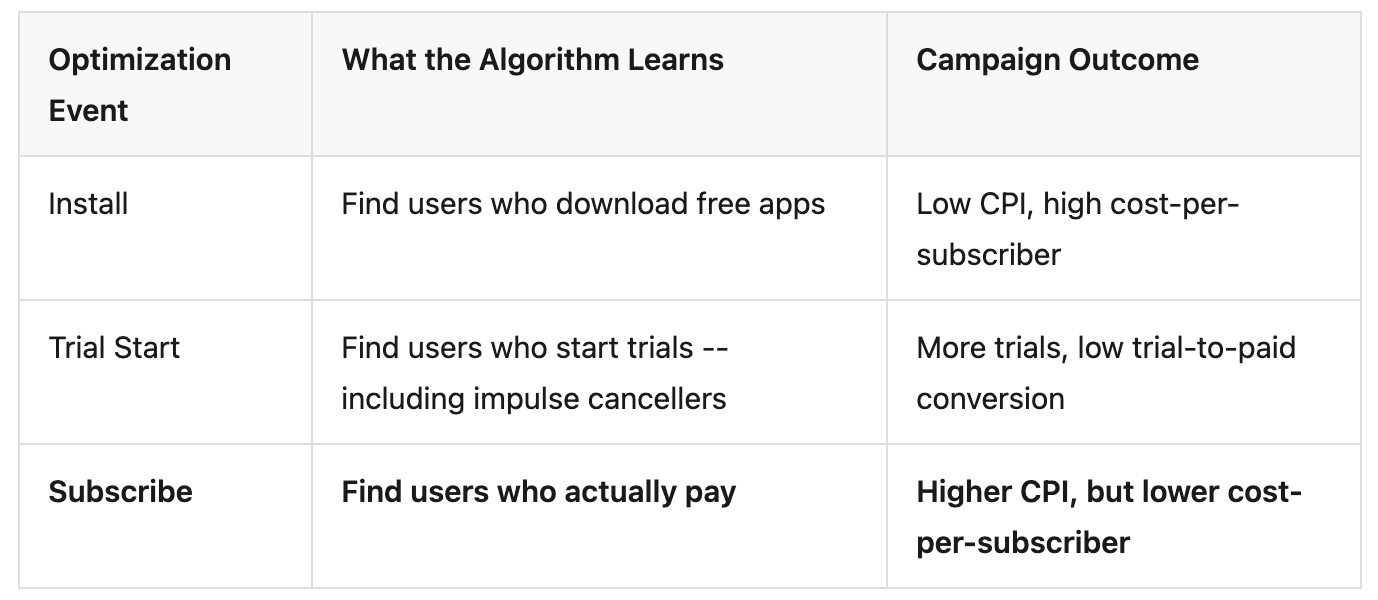

Most subscription apps optimize for Install events because installs fire immediately and generate enough volume for the algorithm's learning phase -- Meta recommends approximately 50 optimization events per ad set within a week to exit the learning phase. But the optimization event you choose determines what the algorithm learns.

Subscription apps see a specific form of signal contamination that most other app categories do not: impulse cancellations.

A user taps "Start Free Trial," gets charge anxiety, and cancels within 10-15 minutes -- before opening the app a second time. That Subscribe event still reaches Meta or Google as a valid conversion. The algorithm treats this user as a success and builds lookalike models around them.

This pattern is common enough that optimizing for a "qualified trial" event has become a popular tactic -- firing the conversion signal only after a user has stayed active for a few hours. Without this filtering, each optimization cycle targets slightly worse users, and the decline compounds over 4-8 weeks. The feedback loop is invisible from the ad platform dashboard -- CPI stays flat, trial starts look healthy, but cost-per-subscriber climbs steadily.

Dirty signals do not cause an immediate crash. They degrade the algorithm's signal-to-noise ratio over time.

Consider a hypothetical growth team spending $10K/month on Meta:

Total damage: months of degraded ad campaign performance from signal contamination that was never visible in the dashboard.

Subscription apps do not just have a signal quality problem. They have a structural problem: the conversion event that matters most is the hardest to send back to ad platforms.

Many subscription apps offer a 7- to 14-day free trial. If the attribution window is shorter than the trial period, the subscription event never links back to the ad that drove it. Even for users on a standard 7-day trial, the actual revenue event occurs outside the default optimization window of most ad platforms, so the conversion is never credited to the campaign.

When a user subscribes, the payment is processed by Apple or Google -- not by the app. Device-side SDKs can detect the subscription state change, but only when the user opens the app again and triggers a sync. If the user does not reopen, the signal is delayed or lost entirely. For real-time, independent signal transmission, the mobile measurement partner (MMP) needs a server-to-server connection -- infrastructure most small teams have not built.

RevenueCat knows exactly who subscribed, renewed, or churned. Meta and Google know nothing about subscription revenue. These two systems do not talk to each other natively in most setups. Growth teams fill the gap with weekly CSV exports -- a manual process that is far too slow for real-time ad campaign optimization and still produces numbers they do not fully trust.

This disconnect creates a measurement gap that compounds with every dollar spent. Without revenue data flowing back to ad platforms, campaigns cannot optimize for what actually drives the business.

The practice of choosing, filtering, and structuring conversion events to improve ad campaign optimization has a name: signal engineering (Sub Club / Thomas Petit). The core principle is simple: send the ad platform something better, and it will do a better job.

According to internal studies by Meta, TikTok, and LinkedIn, better signals can increase conversions by 24% and lower cost per action by 15%. For subscription apps, four changes make the biggest difference.

Switch your campaign optimization event from Install to Subscribe or Start Trial. If subscription volume is too low for the algorithm's learning phase, Start Trial is the next best proxy -- but only if impulse cancellations are filtered.
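The fallback rule above can be written down as a small decision helper. This is a minimal sketch, not a platform API: the function name, the event keys, and the idea of feeding it a per-ad-set event count are all assumptions made for illustration, and the threshold of 50 is the approximate learning-phase figure cited earlier in this article.

```python
# Approximate learning-phase figure cited in this article; treat as a guideline.
LEARNING_PHASE_MIN_EVENTS = 50

# Hypothetical event keys, ordered from the strongest signal to the weakest.
FUNNEL_PRIORITY = ["subscribe", "start_trial", "install"]


def pick_optimization_event(events_per_ad_set, min_events=LEARNING_PHASE_MIN_EVENTS):
    """Return the deepest funnel event with enough volume for the learning phase."""
    for event in FUNNEL_PRIORITY:
        if events_per_ad_set.get(event, 0) >= min_events:
            return event
    # Nothing meets the threshold: fall back to the highest-volume event.
    return max(FUNNEL_PRIORITY, key=lambda e: events_per_ad_set.get(e, 0))


# Subscribe volume is too low here, so Start Trial becomes the proxy.
print(pick_optimization_event({"subscribe": 12, "start_trial": 80, "install": 900}))
```

If the helper returns `start_trial`, the impulse-cancellation filter still has to be in place before that event is worth optimizing for.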

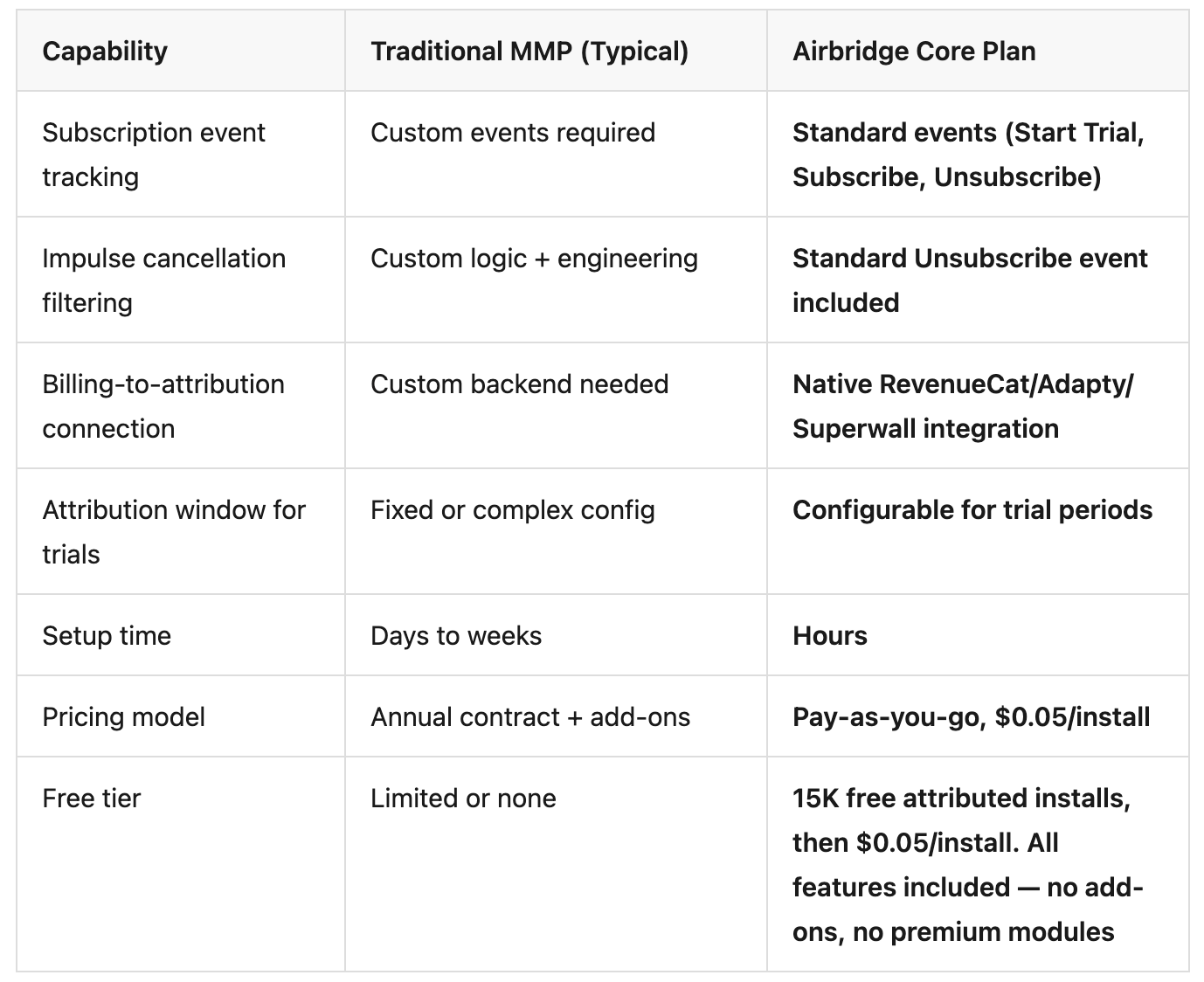

Airbridge Core Plan provides standard subscription events -- Start Trial, Subscribe, Unsubscribe, Order Complete, Order Cancel -- with native integrations for the GMAT channels (Google, Meta, Apple Search Ads, and TikTok) that send these events without custom event engineering.

Define a "qualified trial" window -- a time threshold (e.g., 2-4 hours after trial start) before the conversion event fires to the ad platform. Users who cancel before the threshold are excluded from optimization signals.
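A qualified trial filter can be sketched in a few lines. The version below is illustrative only: the function name, the dictionary inputs, and the 3-hour threshold (picked from inside the 2-4 hour range) are assumptions, not part of any SDK or ad platform API.

```python
from datetime import datetime, timedelta

# Assumed threshold, chosen from the 2-4 hour range discussed above.
QUALIFY_AFTER = timedelta(hours=3)


def qualified_trials(trial_starts, cancellations, now, threshold=QUALIFY_AFTER):
    """Return user IDs whose trial start should be reported to the ad platform.

    trial_starts:  dict of user_id -> datetime the trial began
    cancellations: dict of user_id -> datetime the user cancelled, if they did
    """
    qualified = []
    for user_id, started_at in trial_starts.items():
        deadline = started_at + threshold
        if now < deadline:
            continue  # still inside the waiting window; decide on a later run
        cancelled_at = cancellations.get(user_id)
        if cancelled_at is not None and cancelled_at < deadline:
            continue  # impulse cancellation; never send this conversion
        qualified.append(user_id)
    return qualified
```

The trade-off is latency: the ad platform receives the signal a few hours later, in exchange for never learning from users who cancelled within minutes.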

Airbridge Core Plan tracks both Subscribe and Unsubscribe as standard events, so teams can build their qualified trial logic on top of verified data. Integrations with RevenueCat, Adapty, and Superwall provide verified subscription status to distinguish genuine subscribers from impulse cancellers.

Build a server-to-server integration that matches subscription events to attributed installs and forwards them to ad platforms. Handle edge cases -- billing retries, grace periods, family sharing. Budget at least a week of engineering time.
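To give a sense of what the matching step involves, here is a minimal sketch of the join between billing events and attributed installs. Every field name in it (`transaction_id`, `click_id`, `campaign_id`, and so on) is hypothetical and does not reflect the schema of RevenueCat, Meta, Google, or any MMP. It covers only duplicate deliveries and unattributed users, so grace periods and family sharing would need their own handling.

```python
def build_conversion_payloads(subscription_events, attributed_installs):
    """Join billing events to attributed installs and shape outbound payloads.

    subscription_events: list of dicts from the billing platform (hypothetical fields)
    attributed_installs: dict of user_id -> attribution record (hypothetical fields)
    """
    seen_transactions = set()
    payloads = []
    for event in subscription_events:
        if event["transaction_id"] in seen_transactions:
            continue  # duplicate delivery, e.g. a retried webhook
        seen_transactions.add(event["transaction_id"])

        install = attributed_installs.get(event["user_id"])
        if install is None:
            continue  # no attributed install, so there is no campaign to credit

        payloads.append({
            "event_name": event["type"],
            "event_time": event["occurred_at"],
            "channel": install["channel"],
            "campaign_id": install["campaign_id"],
            "click_id": install["click_id"],
            "value": event.get("revenue", 0.0),
        })
    return payloads
```

The actual send to each ad platform is left out on purpose, since every platform has its own conversion API and authentication.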

Airbridge Core Plan's native RevenueCat, Adapty, and Superwall integration handles this automatically. Subscription events flow from your billing platform into the attribution system, where they inform ad campaign optimization across GMAT channels -- no custom backend needed.

Set your attribution window to exceed your longest trial period. If your trial is 14 days, configure a 21-30 day click-through window. Every subscription that falls outside the window is counted as organic -- hiding the true value of paid channels.
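The window rule comes down to one comparison. The sketch below assumes a simple click-through model and a hypothetical `is_attributed` helper; real attribution rules (view-through, reinstalls, re-engagement) are more involved.

```python
from datetime import datetime, timedelta


def is_attributed(click_time, conversion_time, window_days):
    """True if the conversion lands inside the click-through window."""
    elapsed = conversion_time - click_time
    return timedelta(0) <= elapsed <= timedelta(days=window_days)


# Illustrative timeline: the trial starts shortly after the ad click,
# and the first charge follows a 14-day trial.
click = datetime(2024, 3, 1, 9, 0)
first_charge = click + timedelta(days=14, hours=2)

print(is_attributed(click, first_charge, window_days=7))   # False
print(is_attributed(click, first_charge, window_days=30))  # True
```

With the 7-day window, the same paying subscriber shows up as organic; with the 30-day window, the campaign gets the credit.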

Airbridge Core Plan's Configurable Attribution Rules let teams set windows appropriate for subscription conversion cycles. SKAN Conversion Value Settings optimize iOS signal quality within Apple's privacy framework.

Traditional MMPs can handle these steps too -- but the setup cost and complexity differ significantly. Here is how Airbridge Core Plan compares.

Even with great creatives and precise targeting, dirty signals silently degrade every ad campaign over time. Your budget does not just buy impressions. It buys training data for Meta and Google's ML models. When that data includes impulse cancellations, install-only signals, and disconnected billing events, the algorithm learns to find more users who will never pay.

Cleaning your signals is not one optimization tactic among many -- it is the foundation that every other ad campaign optimization depends on.

Stop training your algorithm on the wrong users -- see which campaigns actually drive subscribers with 15K free attributed installs on Airbridge Core Plan.