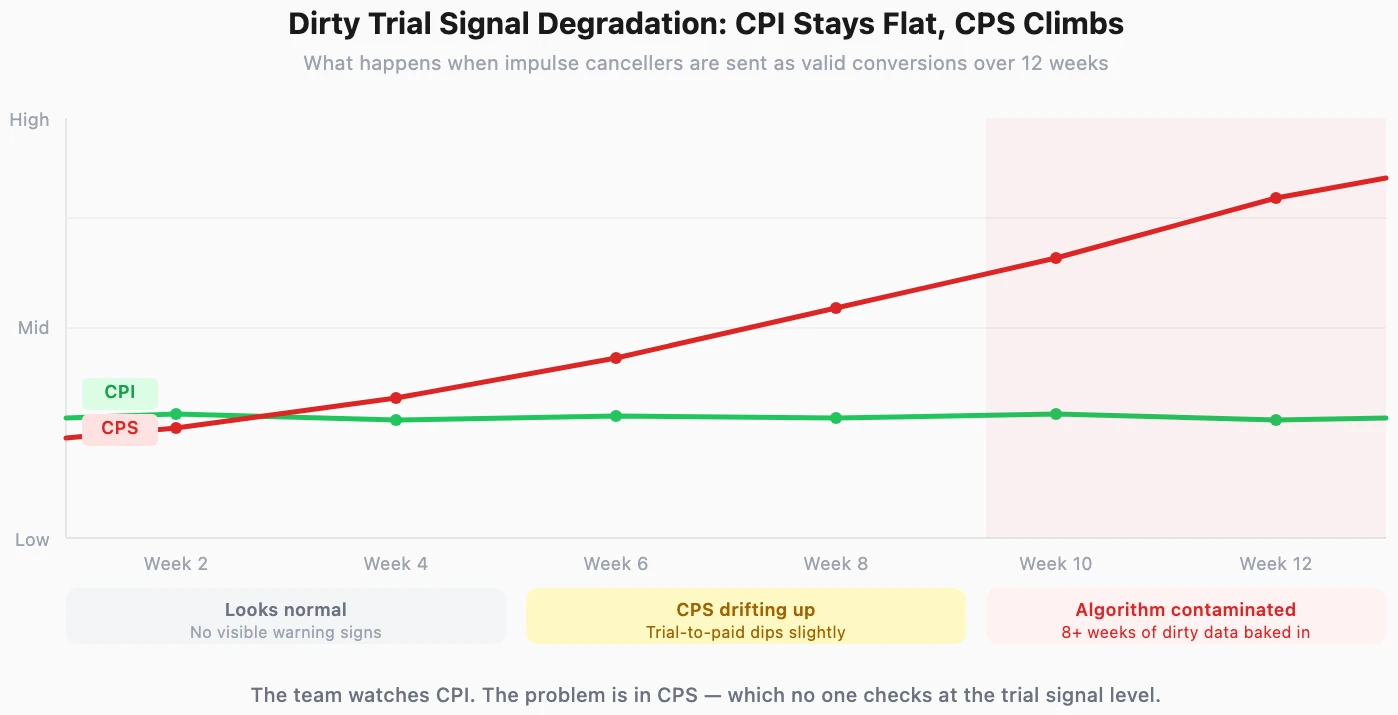

Your Meta campaign generated 1,200 trial starts last month. Your CPI held steady. Your trial volume hit target. Everything in the dashboard looks healthy.

But your cost per subscriber has been climbing steadily over the same period — and no one can explain why.

The problem is not your targeting, your creative, or your budget. It is the trial event itself. Every time a user taps "Start Free Trial" and cancels 10 minutes later, that event reaches Meta as a valid conversion. The algorithm learns from it. And it learns the wrong lesson.

Key Takeaways

The trial event is the most common conversion signal subscription apps send to ad platforms. It is fast: it fires within seconds of the user's action. It is high-volume: it generates enough data for the algorithm to learn from. And for most fitness app teams, it is the only signal they send.

The problem: not all trials are equal, but the algorithm treats them as if they are.

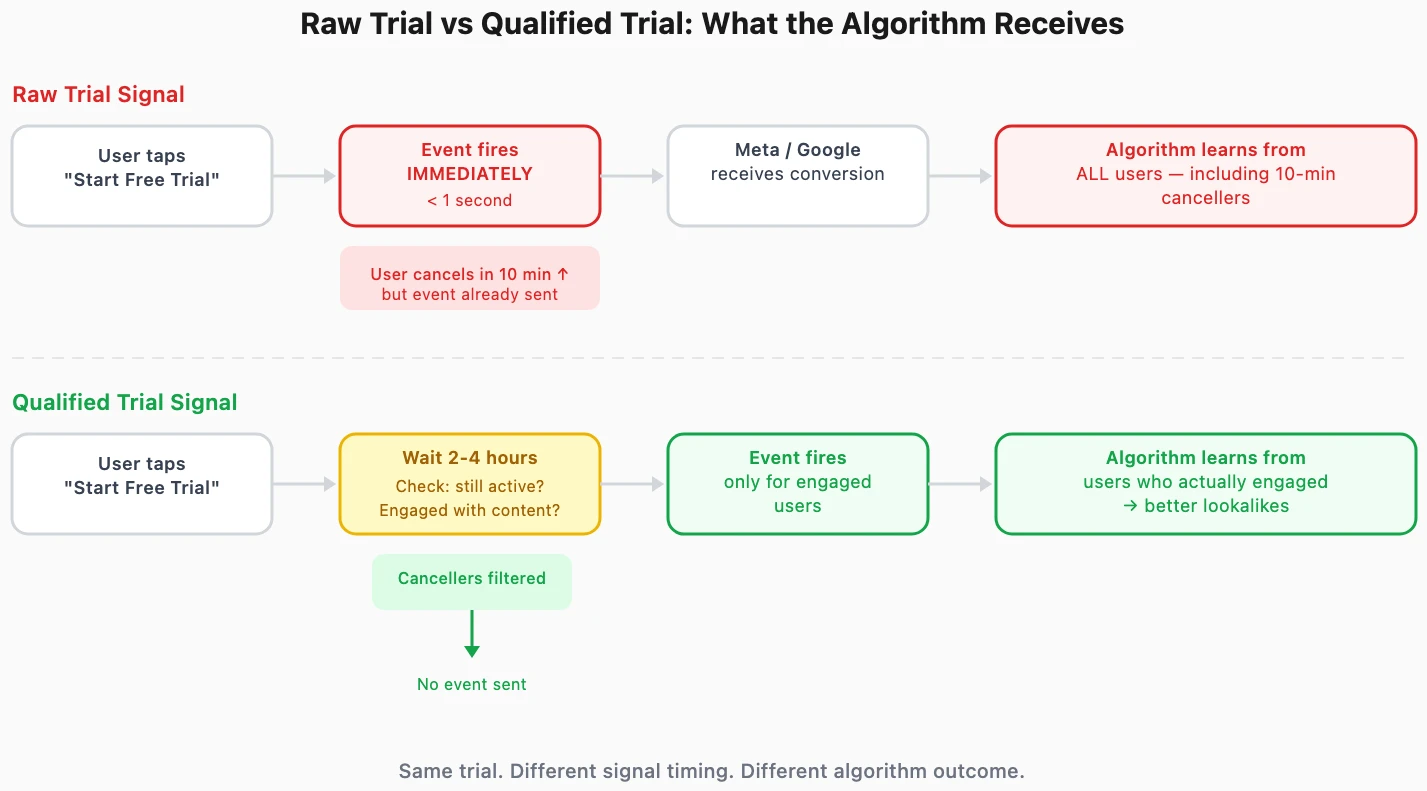

A user sees your fitness app ad on Instagram. They tap through, hit "Start Free Trial," see the subscription terms, and immediately cancel. All within 10 minutes.

They never opened the app a second time. They never started a workout. They never intended to pay.

But Meta received a Start Trial conversion event. From the algorithm's perspective, this user is a success. The lookalike model updates. The next delivery cycle targets more users with this same profile — impulse-driven, charge-anxious users who never convert to paid.

These dirty trial signals degrade algorithm quality invisibly:

The gap between trial intent and subscription intent is category-dependent. For a productivity app, a user who starts a trial probably needs the tool. For a fitness app, a user who starts a trial might just be having a motivation spike — not someone ready to commit to a $14.99/month workout plan.

Business of Apps reports that Health & Fitness apps have a trial-to-paid conversion rate of 35.0%, higher than the global average of 25.6%. But a category-level figure like this hides the variance within a single app's trial cohort. The top-converting trials come from users who engage immediately by completing onboarding, browsing workouts, and setting goals. The impulse cancellers inflate the trial count without contributing to conversion.

For fitness apps, "Start Trial" is closer to a bookmark than a commitment. Users start trials:

Many cancel before the app finishes loading. Every one of these cancellations is a training signal telling the algorithm: find more users like this.

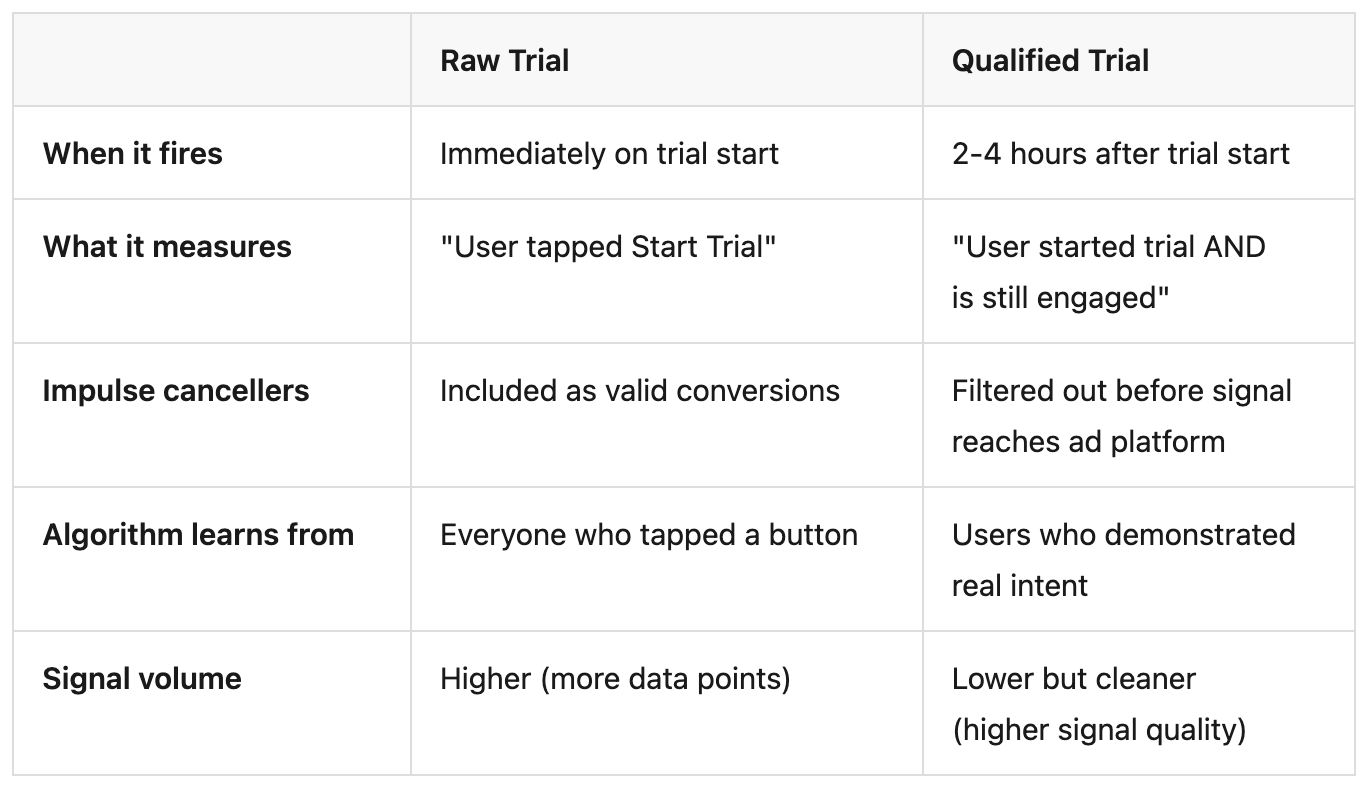

A qualified trial is a conversion event that fires not when the user starts a trial, but after the user demonstrates real engagement — typically 2-4 hours after trial start. It is the same trial, but the signal is delayed until the user passes a minimum engagement threshold.

The tradeoff is signal volume for signal quality. A qualified trial sends fewer conversion events to the ad platform — which means the algorithm has less data to learn from. But the data it does receive is accurate. For fitness apps, where a significant share of trial starts come from impulse users who never intended to pay, removing this noise dramatically improves who the algorithm targets.
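The volume-for-quality tradeoff can be made concrete with a small sketch. The `Trial` record, its field names, and the cohort below are all hypothetical and made up for illustration; they are not data from any real app. The sketch only shows how dropping trials cancelled inside the window shrinks the event count while raising the share of events that belong to paying users.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional


@dataclass
class Trial:
    """Hypothetical trial record; field names are illustrative."""
    cancelled_after: Optional[timedelta]  # None means the trial was never cancelled
    converted_to_paid: bool


def paid_share(trials: list[Trial]) -> float:
    """Fraction of the given trials that became paid subscriptions."""
    if not trials:
        return 0.0
    return sum(t.converted_to_paid for t in trials) / len(trials)


def signal_summary(trials: list[Trial], window: timedelta) -> dict:
    """Compare the raw trial signal with the qualified trial signal."""
    # A trial counts as qualified if it was not cancelled within the window.
    qualified = [
        t for t in trials
        if t.cancelled_after is None or t.cancelled_after > window
    ]
    return {
        "raw_events": len(trials),
        "qualified_events": len(qualified),
        "raw_paid_share": paid_share(trials),
        "qualified_paid_share": paid_share(qualified),
    }


# Made-up cohort: 6 ten-minute cancellers, 1 late canceller, 3 subscribers.
cohort = (
    [Trial(timedelta(minutes=10), False)] * 6
    + [Trial(timedelta(days=2), False)]
    + [Trial(None, True)] * 3
)
summary = signal_summary(cohort, window=timedelta(hours=3))
# raw_events=10, qualified_events=4
# raw_paid_share=0.3, qualified_paid_share=0.75
```

In this invented cohort the platform receives 4 events instead of 10, but three of every four events now describe a user who went on to pay.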

When you send raw trial events, the algorithm builds a profile around every user who started a trial, including the 10-minute cancellers. When you send qualified trial events, the algorithm only sees users who stayed in the trial past the 2+ hour qualification window. The profile shifts:

Meta and TikTok internal studies on trial signal optimization show that higher-quality conversion signals can increase actual conversions by 24% and lower cost per action by 15%. The improvement comes not from better targeting settings — but from better training data.

The algorithm is only as good as the signal you send it. For fitness apps, the difference between a raw trial signal and a qualified trial signal is the difference between "someone who tapped a button" and "someone who started their first workout."


Implementing qualified trials requires two decisions and one measurement system.

The qualification window is how long you wait before firing the conversion event. Industry standard is 2-4 hours, but the right window depends on your app:

The qualification criteria define what engagement looks like for your fitness app:

Start with the simplest criteria — trial still active after 3 hours — and iterate. Overly complex qualification logic adds engineering overhead without proportional signal quality improvement.
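As a minimal sketch of that simplest rule, the check below decides whether a delayed conversion event should fire. The function name, its parameters, and the 3-hour default are assumptions for illustration; how the event is actually delivered to an ad platform or attribution SDK is deliberately left out.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Assumption: 3 hours, the starting point suggested above. Tune per app.
QUALIFICATION_WINDOW = timedelta(hours=3)


def should_fire_qualified_trial(
    trial_started_at: datetime,
    now: datetime,
    cancelled_at: Optional[datetime] = None,
    already_fired: bool = False,
    window: timedelta = QUALIFICATION_WINDOW,
) -> bool:
    """Return True when the qualified trial event should be sent.

    Hypothetical helper: the trial qualifies if the window has elapsed
    and the user had not cancelled by the end of the window.
    """
    if already_fired:
        return False  # send the conversion at most once per trial
    deadline = trial_started_at + window
    if now < deadline:
        return False  # too early, keep waiting
    return cancelled_at is None or cancelled_at > deadline


start = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)

# Ten-minute canceller, checked four hours later: no event.
impulse = should_fire_qualified_trial(
    start, start + timedelta(hours=4), cancelled_at=start + timedelta(minutes=10)
)

# Trial still active four hours later: fire the event.
engaged = should_fire_qualified_trial(start, start + timedelta(hours=4))
```

A check like this could run from a scheduled job or on the next app open. Engagement conditions such as a completed first workout can be added later as extra arguments if the simple rule proves too loose.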

Implementing qualified trials without measuring the impact is guesswork. You need channel-level trial quality data, from before the change and after it, to validate whether your qualification criteria are actually improving cost per subscriber (CPS).

What to measure:

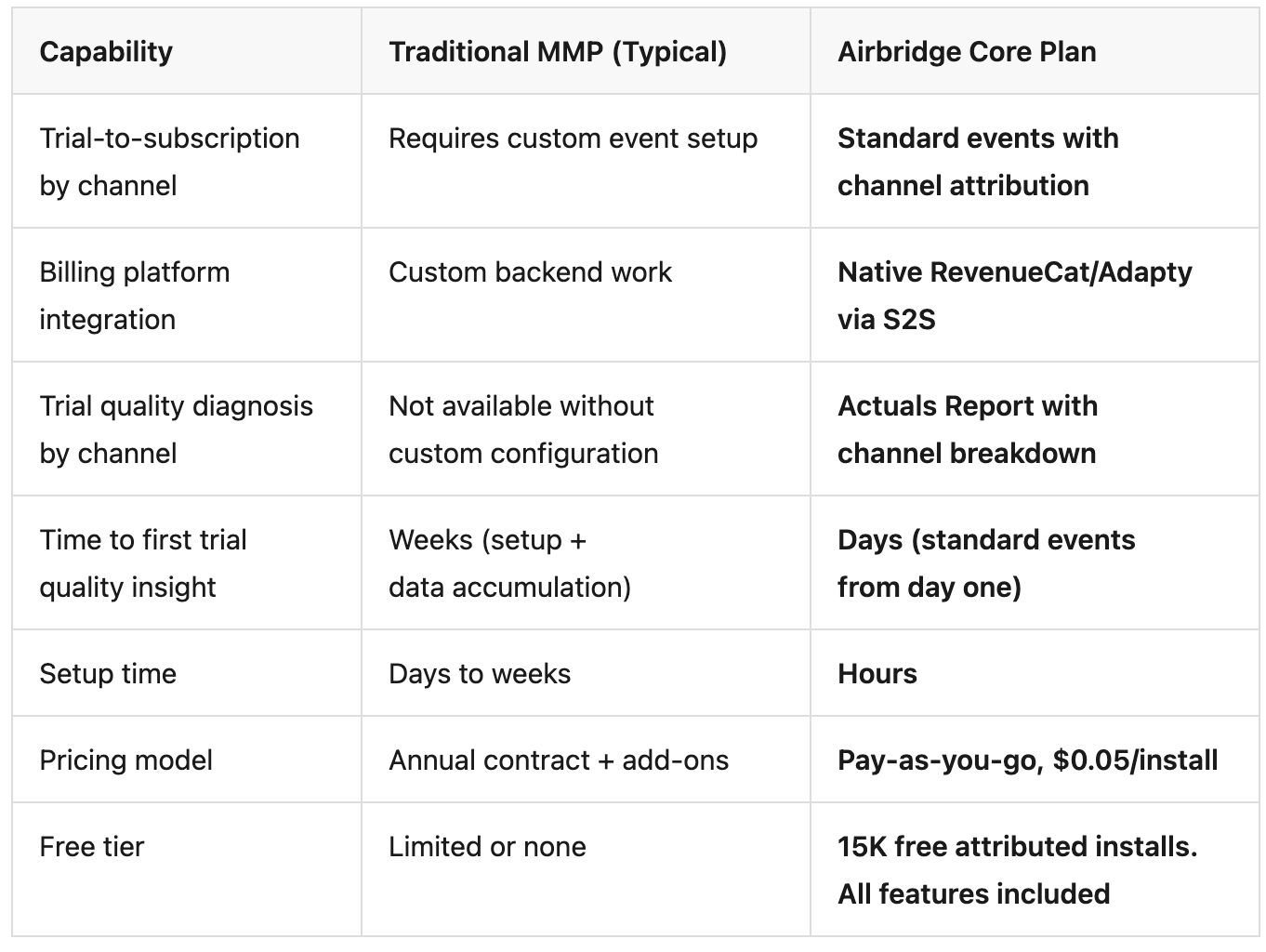

This measurement requires an attribution system that connects trial events to subscription outcomes at the channel level — which is where most fitness app teams hit a gap.
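The before-and-after comparison can be sketched as follows, assuming you can export spend, trial starts, and paid subscriptions per channel for each period. The `ChannelStats` shape, the function names, and the example figures are hypothetical; they do not reflect any particular attribution tool's export format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChannelStats:
    """Hypothetical per-channel export for one reporting period."""
    channel: str
    spend: float
    trial_starts: int
    paid_subscriptions: int


def trial_to_paid_rate(s: ChannelStats) -> float:
    """Share of trial starts that became paid subscriptions."""
    return s.paid_subscriptions / s.trial_starts if s.trial_starts else 0.0


def cost_per_subscriber(s: ChannelStats) -> Optional[float]:
    """Spend divided by paid subscriptions; None when there are none."""
    return s.spend / s.paid_subscriptions if s.paid_subscriptions else None


def compare_periods(
    before: list[ChannelStats], after: list[ChannelStats]
) -> list[dict]:
    """Pair each channel's metrics before and after the signal change."""
    after_by_channel = {s.channel: s for s in after}
    rows = []
    for b in before:
        a = after_by_channel.get(b.channel)
        if a is None:
            continue  # channel has no data in the later period
        rows.append({
            "channel": b.channel,
            "rate_before": trial_to_paid_rate(b),
            "rate_after": trial_to_paid_rate(a),
            "cps_before": cost_per_subscriber(b),
            "cps_after": cost_per_subscriber(a),
        })
    return rows


# Made-up numbers for a single channel, same spend in both periods.
report = compare_periods(
    before=[ChannelStats("meta", 6000.0, 1200, 120)],
    after=[ChannelStats("meta", 6000.0, 800, 200)],
)
# report[0]: rate 0.10 -> 0.25, CPS 50.0 -> 30.0
```

Comparing per channel matters because a blended average can hide one channel that still delivers mostly impulse trialists.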

Implementing qualified trials is an app-level change — your app decides when to fire the event. But validating whether it works requires channel-level attribution data that connects trial starts to subscription outcomes. This is what Airbridge Core Plan provides.

Core Plan tracks Start Trial and Subscribe as standard events with attribution across Meta, Google, Apple Search Ads, and TikTok. The Actuals Report breaks down trial-to-subscription conversion rates by channel — so you can see which channels have the widest gap between trial starts and paid subscriptions.

With native RevenueCat and Adapty integration via server-to-server (S2S) connections, subscription events flow into the attribution system automatically. Before you implement qualified trials, this data diagnoses the problem: which channels have the most dirty trial signals. After implementation, it validates the fix: whether cost per subscriber improved by channel.

The trial event you send to Meta and Google is not just a data point — it is a training signal that shapes who the algorithm targets next. For fitness apps, where the gap between "started a trial" and "willing to pay for a workout plan" is wide, raw trial signals systematically train the algorithm to find the wrong users.

Qualified trials fix the input. Channel-level trial quality measurement validates the output.

See which channels drive trials that convert — not trials that cancel in 10 minutes. Start with 15K free attributed installs on Airbridge Core Plan.